Defragging stacks all the files next to one another in contiguous space on the drive, but as Ashwin said, as soon as you do ANYTHING, the process begins again. At that point the drive will become really slow as the heads have to fly all over the platter to gather up the bits in the holes. Over time, those holes will take a lot of space and when the drive starts to get full an a really large file needs more than any single free space, it will be written into all those holes. Eventually the hole will be too small for anything and will be left there. Next time a file wants storing, that "hole" will be considered, and if the file fits into it, it will go there, leave a smaller "hole" there. Assuming this is a well written app, then it will go back and erase the old version of the file, leaving THAT space now free. If it finds one, it will write that document into that space. In a lot of cases, many bad habits were picked up by DOS and Windows users that have made it way into the Mac/Unix land which is very unnecessary.Īlso, as Ashwin explained, when a file needs to be written then the OS first looks for a single free space where the document will fit. So JUST because it has been done by everyone for countless numbers of years, doesn't necessarily mean there is value in it or that it is necessary with the latest technologies. With SSDs, there is no spinning media or head that is being physically moved around and as such the reads are not affected by any level of fragmentation as long as all the bits are properly known. However, this defragmented state stays for a short while before the fragmentation happens again with normal use. This has the advantage of making the head not seek as much and ability to read the file quickly and present it. The process of defragmentation reads the FAT or inode table and takes all the pieces and aligns them in as much of a consecutive manner as possible.

On Unix based filesystems, the inode table serves a similar purpose.Įither way, with spinning media, when the files begin to get scattered a lot, the head has to do a lot of seeking to find all the components to present the full file for consumption by applications. On Microsoft filesystems, the FAT (File Allocation Table) is used to track all of these pieces as it pertains to a file. The file is split into many parts and scattered around the drive.

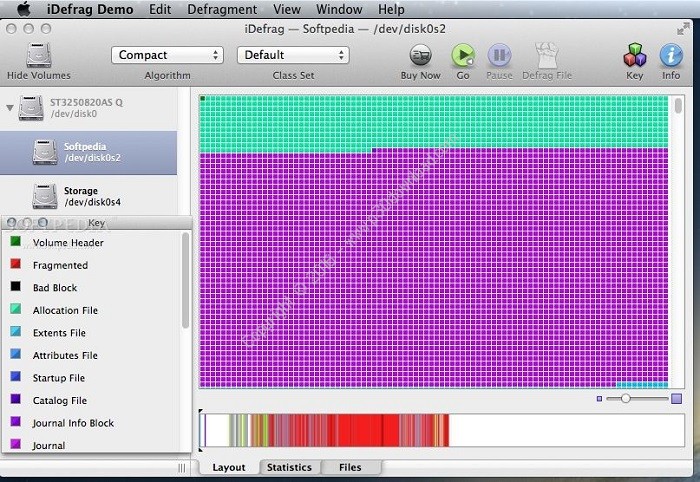

When files are stored on permanent storage, the pieces are seldom stored in a consecutive manner. Well, let's take a look at how this all works. I look forward to some help, as my work is halted until I finish defragging some drives, and I'm wondering if I need to budget 30 hours of computer downtime per 650GB of hard drive defragging :-| Model: ThunderBolt 2 Dock ALSO - Regarding upgrading from iDefrag 1.7.3 to 2.2.8 (per Corialis website suggestion for OS10.9.5): a. Model: Expansion Portable HDD (STEA4000400)Ĭonnection: Seagate's USB 3 Elgato USB 3 Dock/Hub Skip files that cannot be defragmented due to lack of free space: SELECTED/CHECKED ComputerĬonnection: MacBook Pro ThunderBolt 1 Elgato ThunderBolt 2 External drive being defragged/optimized I'm wondering: Does this mean I have another 15+ hours left to wait for this, and if so, is this normal/OK for iDefrag to take some 15-30 hours to optimize 650GB on a 4TB drive?!Įnable per-class sorting: SELECTED/CHECKED I recently upgraded my OS from 10.7 to 10.9.5, and I already had iDefrag 1.7.3 (229), which I'm using now.Īs we speak, I'm about 15+ hours into a iDefrag Optimize on a 4TB external drive that only has 650GB used, and the Location Indicator is barely over halfway through the populated area of the Whole Disk Display. Hi MF community! I hope someone might be able to advise me here.
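To make the "holes" explanation a bit more concrete, here is a rough Python sketch of it. This is only a toy model under loud assumptions: the disk is a list of 12 blocks, the allocator takes the first single hole that fits and otherwise spills across holes, and the names `free_runs`, `allocate`, `delete`, `fragments` and `defragment` are made up for illustration. It is not how iDefrag or any real filesystem allocator is implemented.

```python
# Toy model (an assumption for illustration, not a real filesystem):
# the disk is a list of blocks; None means free, a letter names the owning file.

def free_runs(disk):
    """Return (start, length) for each run of contiguous free blocks."""
    runs, start = [], None
    for i, block in enumerate(disk):
        if block is None:
            if start is None:
                start = i
        elif start is not None:
            runs.append((start, i - start))
            start = None
    if start is not None:
        runs.append((start, len(disk) - start))
    return runs

def allocate(disk, name, size):
    """Write a file into the first hole that fits, else spread it over holes."""
    runs = free_runs(disk)
    for start, length in runs:
        if length >= size:  # a single free space where the file fits
            disk[start:start + size] = [name] * size
            return True
    if sum(length for _, length in runs) < size:
        return False  # not enough free space at all
    remaining = size
    for start, length in runs:  # no single hole fits: use the holes in order
        take = min(length, remaining)
        disk[start:start + take] = [name] * take
        remaining -= take
        if remaining == 0:
            break
    return True

def delete(disk, name):
    """Erase a file, leaving its blocks free (a new hole)."""
    for i, block in enumerate(disk):
        if block == name:
            disk[i] = None

def fragments(disk, name):
    """Count how many separate pieces a file is stored in."""
    count, prev = 0, None
    for block in disk:
        if block == name and prev != name:
            count += 1
        prev = block
    return count

def defragment(disk):
    """Pack every file contiguously, in order of first appearance."""
    order = []
    for block in disk:
        if block is not None and block not in order:
            order.append(block)
    packed = []
    for name in order:
        packed.extend([name] * disk.count(name))
    return packed + [None] * (len(disk) - len(packed))

def show(disk):
    return "".join(block or "." for block in disk)

disk = [None] * 12
allocate(disk, "A", 4)
allocate(disk, "B", 4)
allocate(disk, "C", 2)
delete(disk, "A")        # the old version is erased, leaving a 4-block hole
allocate(disk, "D", 3)   # fits in that hole, leaving a 1-block hole behind
allocate(disk, "E", 3)   # no single hole fits, so it is split across two
print(show(disk), "E is in", fragments(disk, "E"), "pieces")
disk = defragment(disk)
print(show(disk), "E is in", fragments(disk, "E"), "piece")
```

If you then delete a file and write a few more, the holes come right back, which is the "as soon as you do ANYTHING, the process begins again" point.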
